Bridging Video-text Retrieval with Multiple Choice Questions

Code

Our code and pre-trained model are available at https://github.com/TencentARC/MCQ

Abstract

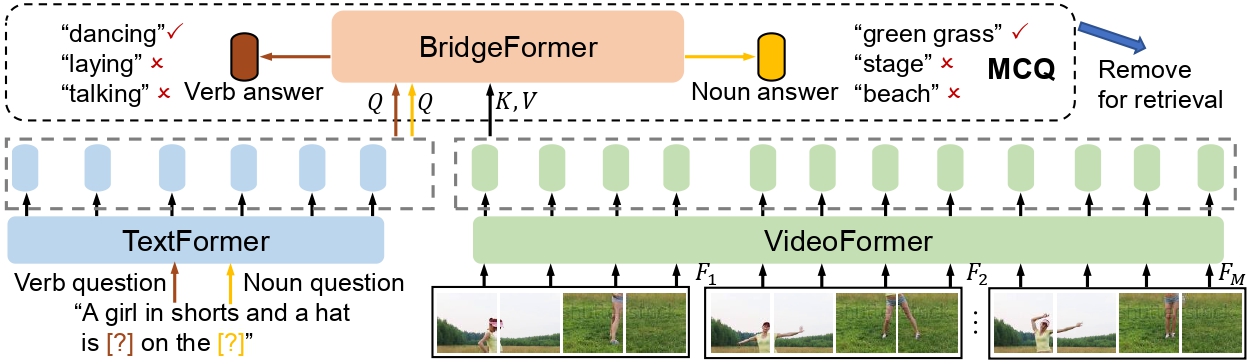

Pre-training a model to learn transferable video-text representations for retrieval has attracted a lot of attention in recent years. Previous dominant works mainly adopt two separate encoders for efficient retrieval, but ignore local associations between videos and texts. Another line of research uses a joint encoder to let video and text interact, but suffers from low efficiency because every text-video pair must be fed into the model. In this work, we enable fine-grained video-text interactions while maintaining high retrieval efficiency via a novel pretext task, dubbed Multiple Choice Questions (MCQ), in which a parametric module, BridgeFormer, is trained to answer the “questions” constructed from the text features by resorting to the video features. Specifically, we exploit the rich semantics of text (i.e., nouns and verbs) to build questions, with which the video encoder can be trained to capture more regional content and temporal dynamics. In the form of questions and answers, the semantic associations between local video-text features can be properly established. BridgeFormer can be removed for downstream retrieval, yielding an efficient and flexible model with only two encoders. Our method outperforms state-of-the-art methods on the popular text-to-video retrieval task on five datasets under different experimental setups (i.e., zero-shot and fine-tuning), including HowTo100M (one million videos). We further conduct zero-shot action recognition, which can be cast as video-to-text retrieval, and our approach also significantly surpasses its counterparts. As an additional benefit, our method achieves competitive results with much shorter pre-training videos on single-modality downstream tasks, e.g., action recognition with linear evaluation.
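The question construction described in the abstract can be illustrated with a small sketch. It is not taken from the released code: the function name `build_questions`, the `[MASK]` placeholder string, and the hand-written part-of-speech tags are all assumptions made for illustration, and the sketch simply emits one question per noun or verb. In practice the tags would come from a part-of-speech tagger, and the erased word serves as the answer that the model must recover by looking at the video.

```python
from typing import List, Tuple

MASK = "[MASK]"  # hypothetical placeholder; the real token may differ


def build_questions(
    tokens: List[str], pos_tags: List[str], target: str
) -> List[Tuple[str, str]]:
    """Erase each token tagged `target` in turn.

    Returns a list of (question, answer) pairs, where the question is the
    caption with one word replaced by MASK and the answer is the erased word.
    """
    questions = []
    for i, tag in enumerate(pos_tags):
        if tag == target:
            masked = tokens[:i] + [MASK] + tokens[i + 1:]
            questions.append((" ".join(masked), tokens[i]))
    return questions


# Toy caption with hand-assigned tags (assumed, not produced by a tagger).
tokens = ["a", "girl", "is", "cutting", "cucumbers"]
tags = ["DET", "NOUN", "AUX", "VERB", "NOUN"]

noun_questions = build_questions(tokens, tags, "NOUN")
verb_questions = build_questions(tokens, tags, "VERB")

for question, answer in noun_questions + verb_questions:
    print(f"{question!r} -> {answer!r}")
```

Noun questions push the video encoder toward regional content (which object is present), while verb questions push it toward temporal dynamics (what is happening), matching the motivation given above.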

Results

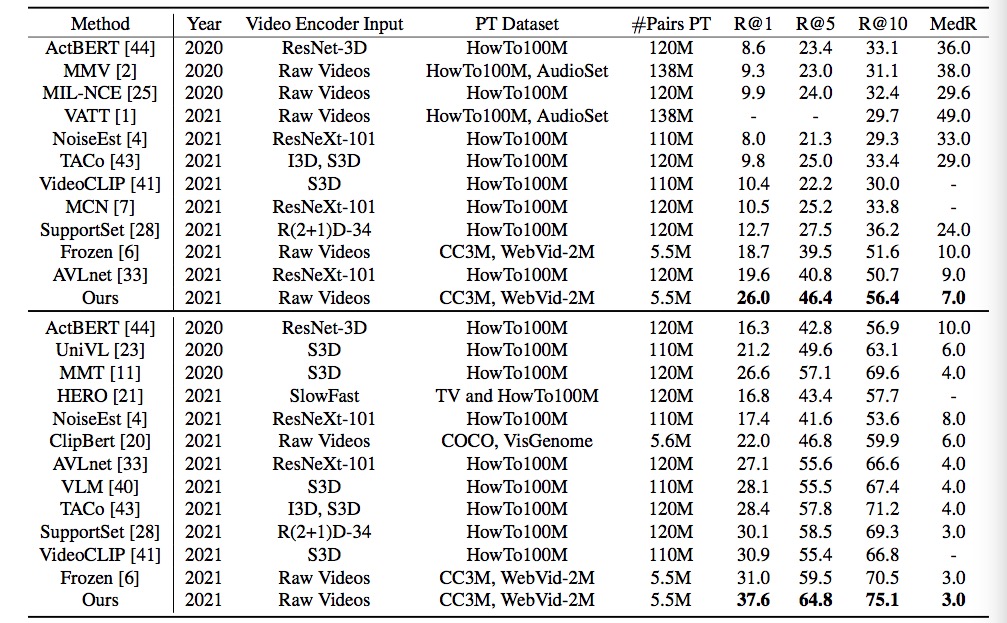

1. Text-to-video retrieval results on the MSR-VTT test set with 1K videos.

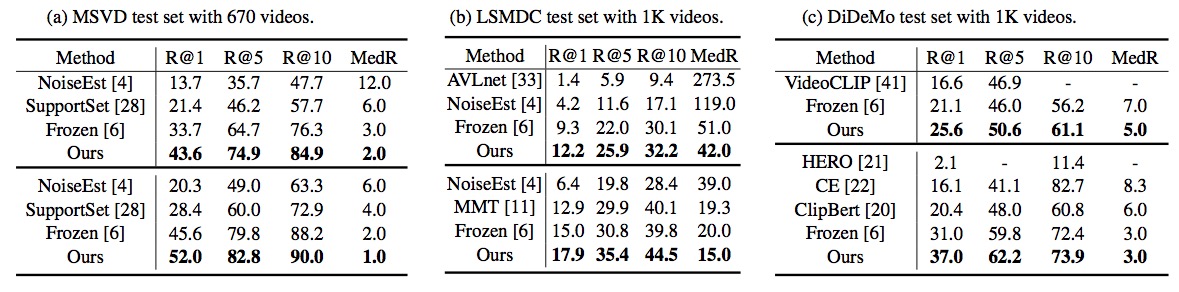

2. Text-to-video retrieval results on different datasets.

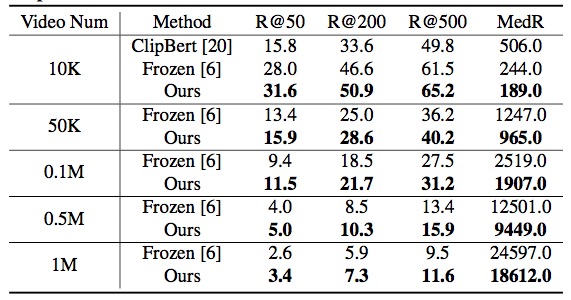

3. Zero-shot text-to-video retrieval results on the large-scale HowTo100M dataset.

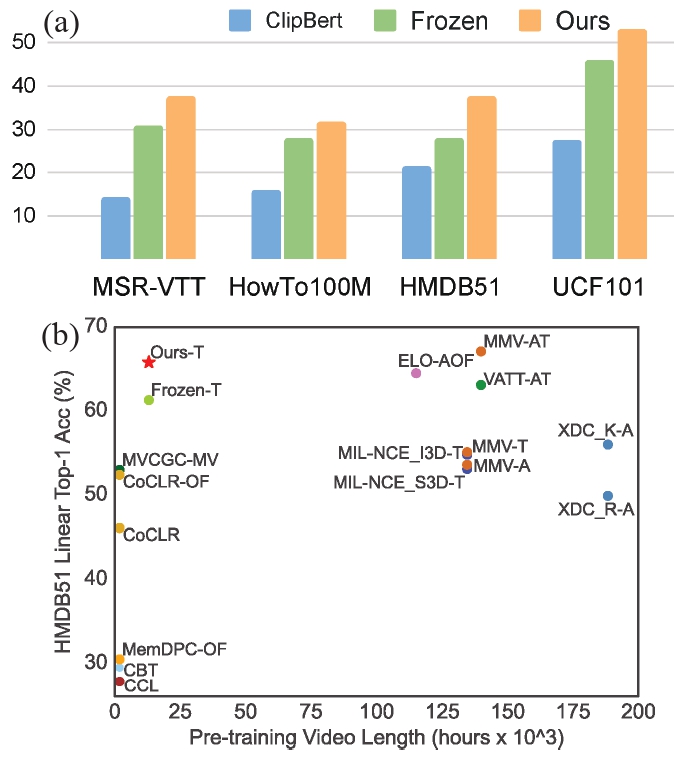

4. Results of zero-shot action recognition (a) and action recognition with linear evaluation (b).

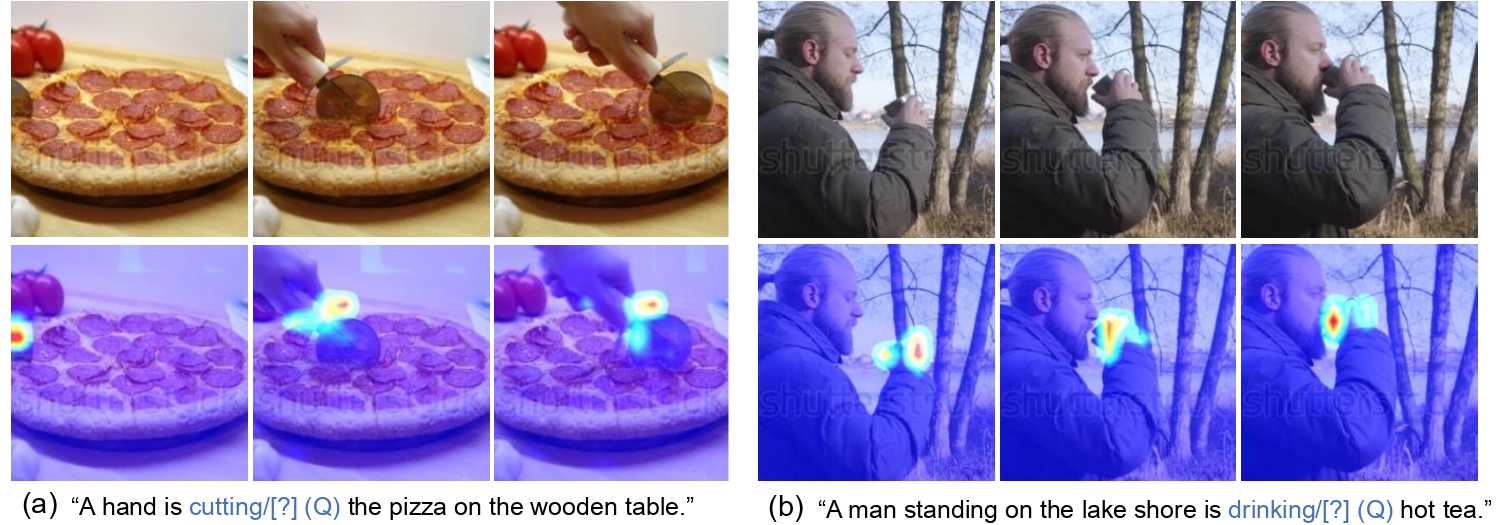
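Zero-shot action recognition cast as video-to-text retrieval can be sketched as follows: each action label is embedded by the text encoder, the video is embedded by the video encoder, and the label with the highest similarity is returned. This is a minimal illustration rather than the released implementation. The function names, the use of cosine similarity as the score, and the three-dimensional toy embeddings are assumptions; real embeddings would come from the two pre-trained encoders.

```python
import math
from typing import Dict, List


def cosine(u: List[float], v: List[float]) -> float:
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def classify_by_retrieval(
    video_emb: List[float], label_embs: Dict[str, List[float]]
) -> str:
    """Return the label whose text embedding is most similar to the video."""
    return max(label_embs, key=lambda name: cosine(video_emb, label_embs[name]))


# Made-up toy embeddings standing in for text-encoder outputs of label names.
label_embs = {
    "archery": [0.9, 0.1, 0.0],
    "bowling": [0.1, 0.9, 0.1],
    "surfing": [0.0, 0.2, 0.9],
}
# Made-up toy embedding standing in for the video-encoder output.
video_emb = [0.2, 0.8, 0.1]

predicted = classify_by_retrieval(video_emb, label_embs)
print(predicted)
```

Because the label embeddings do not depend on the video, they can be computed once and reused for every video, which is the efficiency advantage of keeping only two separate encoders at inference time.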

Visualization

1. Cross-modality attention between the text tokens of noun questions and video tokens from BridgeFormer.

2. Cross-modality attention between the text tokens of verb questions and video tokens from BridgeFormer.